Part 3: Implementing Model Context Protocol (MCP) | The "USB-C" of AI

I have a Bachelor's degree in computer science from the University of Delhi, and I like to work on small open-source projects from time to time.

Welcome back. If you are reading this, you probably saw "Model Context Protocol" in the title and thought, "Oh cool, the shiny new thing!"

I have a confession to make: The title of this post is a bit of a lie.

We are not building a full-blown "MCP Server" for our Arduino today. We are building Tools that our agent can execute.

"Wait," I hear you ask, "Aren't those the same thing?"

Technically, they overlap: an MCP server is just a standardized way of exposing tools. In practice? Not at all. Most people don't distinguish between the two, and that causes a lot of headaches.

MCP (Model Context Protocol) is designed for interoperability. It is for connecting other people's services to your AI. If you want to connect a Google Drive MCP or a Slack MCP to your agent, that’s great. But building a full standalone MCP server just to run a few Python functions on your own laptop? That is like bringing a broadsword to butter your toast. It works, sure, but you look ridiculous doing it, and you might cut the table.

For our Arduino agent, we will primarily use Tool Decorators. They are simpler, they run in the same process, and they give us fine-grained control (which we need for the safety permissions in Part 6).

However, if you did want to let your friend in another country control your Arduino over the internet, or if you wanted to publish your "Arduino Controller" as a package for the world to use, then you would wrap it in a formal MCP Server.
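For the curious, that wrapping is not much code. Below is a minimal sketch that assumes the official MCP Python SDK (`pip install mcp`) and its `FastMCP` helper. `blink_led` is a stub standing in for the real Part 2 call, and the server name `arduino-controller` is an arbitrary label I picked.

```python
# A hypothetical "Arduino Controller" exposed as a formal MCP server.
# Assumes the official MCP Python SDK (pip install mcp); blink_led is a stub.

VALID_COLORS = {"red", "green", "blue"}


def blink_led(color: str, times: int = 1) -> str:
    """Trigger the LED array to blink.

    Args:
        color: One of "red", "green", or "blue".
        times: How many times to blink (default 1).
    """
    if color not in VALID_COLORS:
        raise ValueError(f"unsupported color: {color!r}")
    # Stub: a real server would forward this to the Part 2 HTTP API.
    return f"Blinked {color} LED {times} time(s)"


def build_server():
    """Register blink_led on a FastMCP server; the SDK is imported lazily."""
    from mcp.server.fastmcp import FastMCP

    server = FastMCP("arduino-controller")  # the name identifies this server to clients
    server.tool()(blink_led)  # equivalent to decorating blink_led with @server.tool()
    return server
```

If you call `build_server().run()` from a script, the tool is served over stdio, the default transport. That is how a client like Claude Desktop or the MCP Inspector would launch it. Everything beyond this (transports, auth, packaging) is the overhead the rest of this post avoids.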

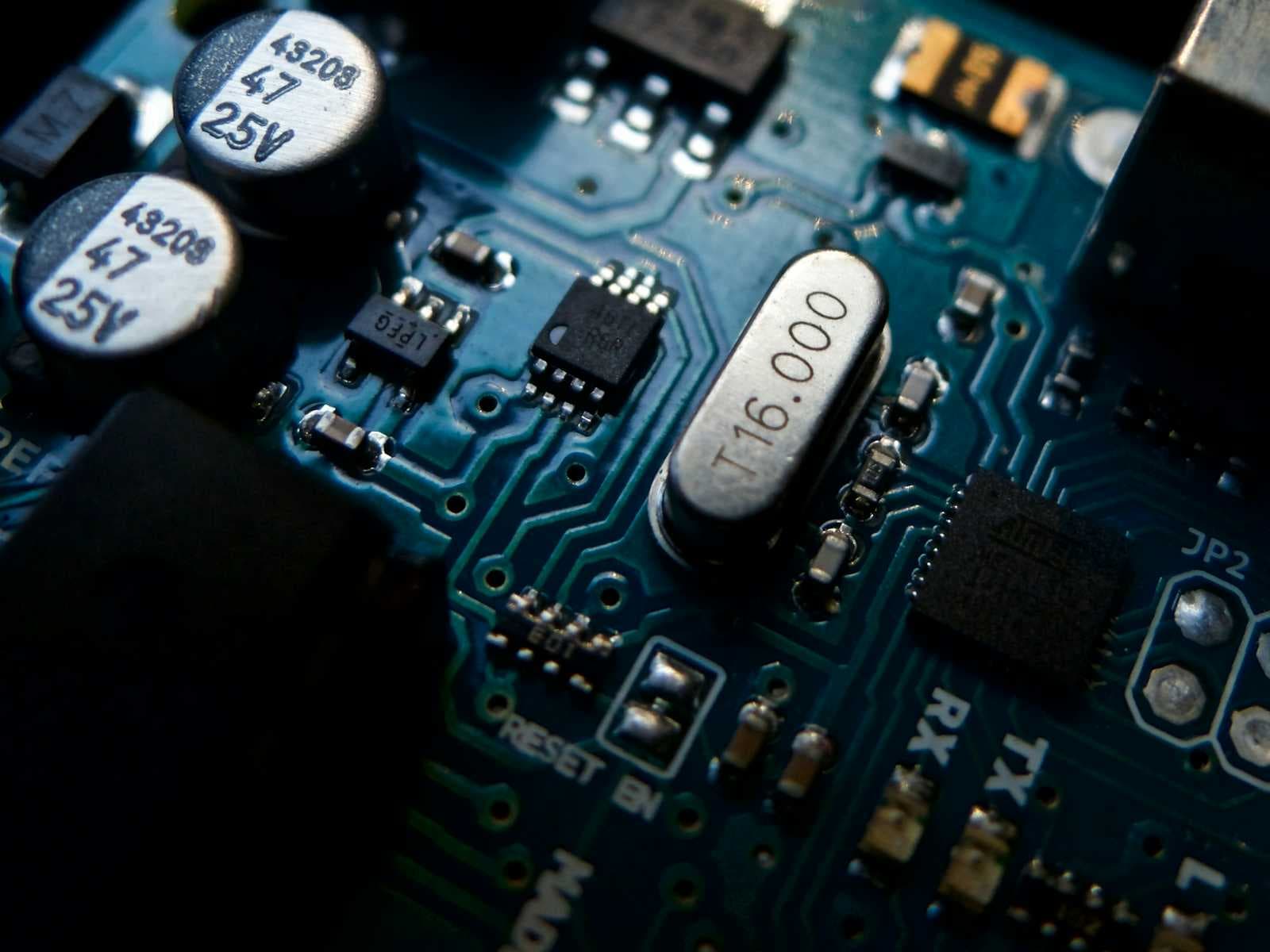

Understanding MCP

Although we are taking the lazy (sorry, "efficient") route for our internal code, we will still follow the ideas behind the MCP standard.

Why? Because **hardcoding function calling is unscalable.**

In the old days (last year), if you wanted an LLM to search the web, you wrote a specific function and manually shoved the JSON schema into the prompt. If you changed one argument, everything broke.

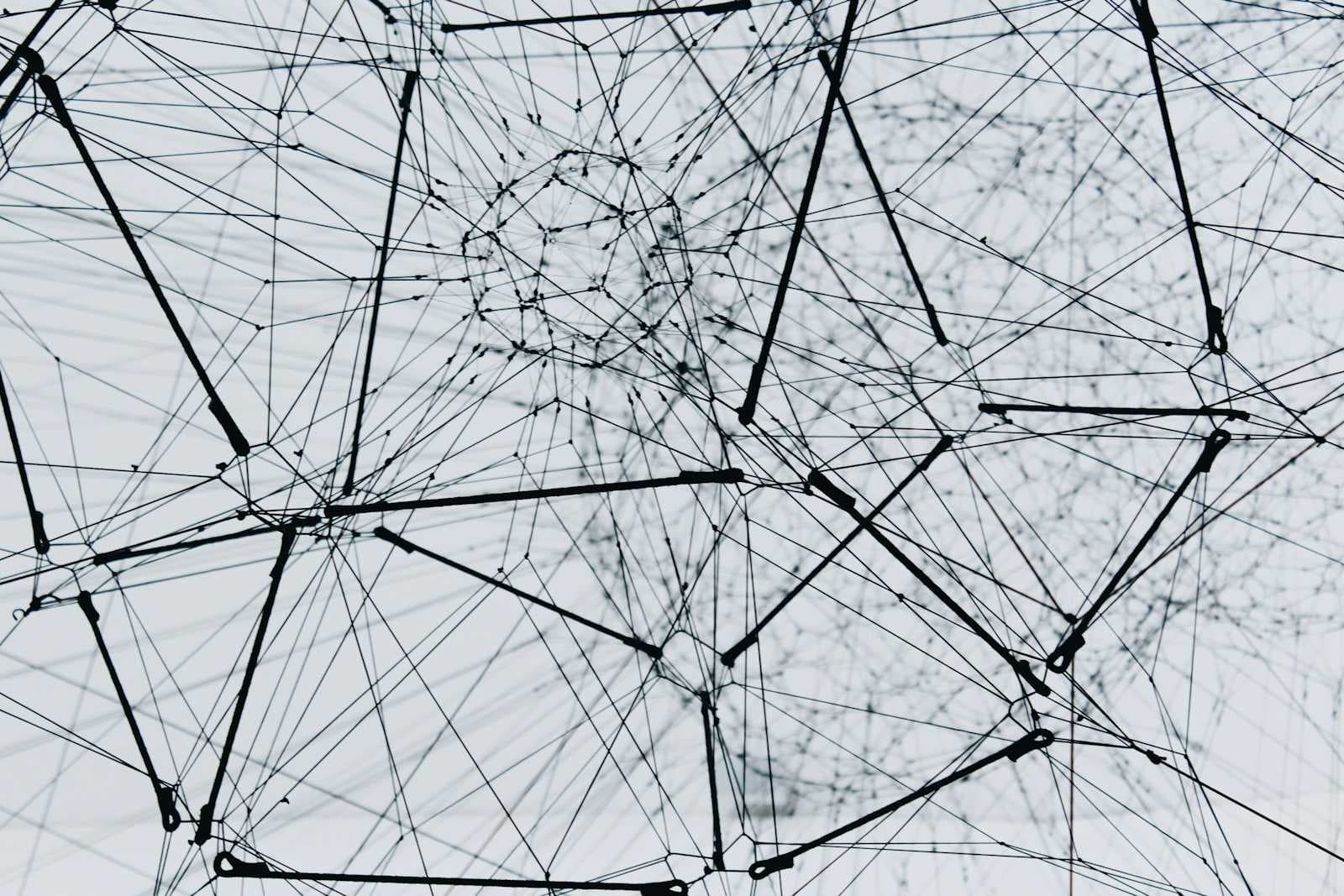

MCP is the "USB-C" for AI tools. It standardizes how an LLM asks, "What can I do?" and "How do I do it?"
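Under the hood, those two questions map to two JSON-RPC 2.0 methods in the MCP specification. `tools/list` answers "What can I do?" and `tools/call` answers "How do I do it?". The sketch below only builds the messages with the standard library; it does not talk to a real server.

A few assumptions to flag:
- The `blink_led` tool and its schema are hypothetical.
- The response is hand-written for illustration.
- A real client would first perform MCP's `initialize` handshake.

```python
import json
from typing import Optional


def jsonrpc_request(req_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP uses."""
    msg = {"jsonrpc": "2.0", "id": req_id, "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# "What can I do?": ask the server for its tool catalogue.
list_req = jsonrpc_request(1, "tools/list")

# A server answers with each tool's name, description, and JSON Schema.
# This response is hand-written for a hypothetical blink_led tool.
list_resp = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "blink_led",
                "description": "Blink the LED array. 'color' is red, green, or blue; 'times' is the repeat count.",
                "inputSchema": {
                    "type": "object",
                    "properties": {
                        "color": {"type": "string", "enum": ["red", "green", "blue"]},
                        "times": {"type": "integer"},
                    },
                    "required": ["color"],
                },
            }
        ]
    },
}

# "How do I do it?": invoke a listed tool by name, with arguments matching its schema.
tool = list_resp["result"]["tools"][0]
call_req = jsonrpc_request(2, "tools/call", {"name": tool["name"], "arguments": {"color": "red", "times": 3}})

print(list_req)
print(call_req)
```

The key design point is that the schema travels with the tool. The client never hardcodes it, so changing an argument on the server side no longer silently breaks the prompt.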

The best news? MCP is now part of the Linux Foundation. It isn't just an Anthropic experiment anymore; it is an open standard. It is here to stay. We can bet the farm on it.

Python Tools vs. Full MCP Servers

It is easy to get confused by the terminology, so let's clear the air. Should you wrap your code in a fancy MCP server, or should you just write a Python function and slap a decorator on it?

| Feature | Local Tools | MCP Servers |
| --- | --- | --- |
| What is it? | A standard Python function decorated with @tool. It runs directly inside your agent's process. | A standalone service that speaks the Model Context Protocol (JSON-RPC) over stdio or HTTP. |
| Complexity | Low. You just write code. No extra servers, no networking logic. | Medium/High. Requires setting up a server, defining resources, and managing a transport layer. |
| Performance | Instant. It shares the same memory space as your agent. Zero network latency. | Slight latency. Data has to be serialized to JSON, sent over the wire (or pipe), and deserialized back. |
| When to Use | When you are building a specific, private agent that controls your own hardware or local files. If only you need it, keep it local. | When you want to share a tool with the world, or connect to a third-party service (like Google Drive, Slack, or a remote database). |
| Interoperability | Zero. This tool only works within your specific Python script. | Universal. Any MCP-compliant client (Claude Desktop, your agent, or a friend's IDE) can use it instantly. |
| Debugging | Easy. Just use print() statements or standard breakpoints in your IDE. | Tricky. You need the MCP Inspector to see what is happening across the connection boundary. |
| Analogy | Like cooking dinner in your own kitchen. Fast, messy, and exactly how you like it. | Like ordering from a restaurant API. Standardized menu, consistent delivery, but more overhead. |

Since we are hacking together a custom Arduino controller that is physically plugged into our specific USB port, we are sticking with Local Tools. It’s faster and easier to debug.

Defining Our "Local" Tools

For our project, we need a way to control the Arduino via the API we built in Part 2. In this part, we are going to write Python wrappers. These wrappers define what the LLM sees.

I’ve written the code for you here:

Let’s look at how this works. We are taking the API endpoints we created in Part 2 and wrapping them in functions with very descriptive docstrings.
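As a rough, stdlib-only illustration of such a wrapper, here is one possible shape. The base URL `http://localhost:8000` and the `POST /led/blink` route with a JSON body are placeholders, so substitute whatever routes you actually built in Part 2. Building the request separately from sending it lets you test the wrapper without the board attached.

```python
import json
import urllib.request

ARDUINO_API = "http://localhost:8000"  # hypothetical address of the Part 2 API
VALID_COLORS = {"red", "green", "blue"}


def build_blink_request(color: str, times: int = 1, base_url: str = ARDUINO_API) -> urllib.request.Request:
    """Build (but do not send) the HTTP request for the hypothetical /led/blink route."""
    if color not in VALID_COLORS:
        raise ValueError(f"color must be one of {sorted(VALID_COLORS)}, got {color!r}")
    if times < 1:
        raise ValueError("times must be a positive integer")
    body = json.dumps({"color": color, "times": times}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/led/blink",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def blink_led(color: str, times: int = 1) -> str:
    """Trigger the LED array to blink.

    Args:
        color: LED color to flash; one of "red", "green", or "blue".
        times: How many times to blink (a positive integer, default 1).

    Returns:
        The raw response text from the Arduino API.
    """
    req = build_blink_request(color, times)
    with urllib.request.urlopen(req, timeout=5) as resp:  # the actual network call to the board's API
        return resp.read().decode("utf-8")
```

Notice that the validation errors are written in plain English. If the model passes a bad color, the error message it gets back tells it how to fix the call.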

Why are the docstrings important when defining tools for LLMs?

Because the LLM doesn't read your code; it reads your documentation. If your docstring says "Blinks LED," the AI might struggle. If it says, "Triggers the LED array to blink. Requires a 'color' argument (red, green, blue) and a 'times' argument for repetition," the AI knows exactly what to do.

We use the @tool decorator from LangChain (langchain_core.tools, which LangGraph agents accept directly) to magically convert these Python functions into the JSON schemas the LLM expects.
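To demystify the "magic", here is a deliberately simplified imitation of what a decorator like @tool does, using only the standard library. It reads the function's name, type hints, defaults, and docstring, then emits a JSON-Schema-style description. This is not LangChain's implementation, which uses Pydantic and handles far more cases, and `simple_tool` is a hypothetical name.

```python
import inspect
from typing import get_type_hints

# Map a few Python annotations to JSON Schema type names.
PY_TO_JSON = {str: "string", int: "integer", float: "number", bool: "boolean"}


def simple_tool(fn):
    """Attach a JSON-Schema-like description of fn as fn.schema (toy version of @tool)."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        properties[name] = {"type": PY_TO_JSON.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # no default means the LLM must supply it
    fn.schema = {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",  # the docstring is what the LLM reads
        "parameters": {"type": "object", "properties": properties, "required": required},
    }
    return fn


@simple_tool
def blink_led(color: str, times: int = 1) -> str:
    """Triggers the LED array to blink. Requires a 'color' argument (red, green, blue)
    and a 'times' argument for repetition."""
    return f"blinking {color} x{times}"  # stub body; a real tool would call the Arduino API
```

With LangChain itself, you would import `tool` from `langchain_core.tools` and decorate the function with `@tool`. Printing the resulting object's `.description` and `.args` is a quick way to see exactly what the model will be shown.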

Adding "Real" Third-Party MCP Tools

Now, let's say you do want to add some external superpowers to your agent. Maybe you want your Arduino agent to be able to do complex math or check the weather before deciding to flash the lights.

You don't need to write those tools from scratch. You can just plug in an existing MCP server.

We use the langchain-mcp-adapters library to do this. It acts as a universal translator.

Here is how you plug a standard MCP server into your LangGraph agent:

See the difference?

Internal logic (Arduino): We write local Python functions decorated as tools.

External capabilities (Math, Search, Filesystem): We use MultiServerMCPClient to pull them in from the ecosystem.
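A minimal sketch of that split is below, with these assumptions:
- It targets langchain-mcp-adapters 0.1 or later, where `MultiServerMCPClient` takes a dict of connections and `get_tools()` is awaited directly. Older releases used the client as an async context manager instead.
- The `math_server.py` script and the weather URL are placeholders, not servers from this series.
- `load_all_tools` is a hypothetical helper name.

```python
# Hypothetical connection table: the script path and URL below are placeholders.
MCP_SERVERS = {
    "math": {
        "command": "python",
        "args": ["math_server.py"],  # a local MCP server launched as a subprocess
        "transport": "stdio",
    },
    "weather": {
        "url": "http://localhost:8001/mcp",  # an MCP server already running over HTTP
        "transport": "streamable_http",
    },
}


async def load_all_tools(local_tools):
    """Combine our in-process Arduino tools with tools discovered on MCP servers."""
    # Lazy import so this module loads even where the adapter isn't installed.
    from langchain_mcp_adapters.client import MultiServerMCPClient

    client = MultiServerMCPClient(MCP_SERVERS)
    mcp_tools = await client.get_tools()  # each MCP tool arrives as a LangChain tool
    return list(local_tools) + list(mcp_tools)
```

From synchronous code you could run `asyncio.run(load_all_tools([blink_led]))` and hand the resulting list to your LangGraph agent. Once loaded, local and remote tools are the same kind of object to the agent, which is the whole point.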

Trust, but Verify using the MCP Inspector

Building tools for an AI agent can often feel like coding in the dark. You might define what you believe is a perfect function, pass it to the Large Language Model (LLM), and then watch in frustration as the model hallucinates non-existent arguments or fails to execute the command.

This is where the MCP Inspector comes in handy. It is difficult to understand exactly what is happening under the hood if you cannot see the data structures involved. The Inspector solves this by allowing you to load your MCP server into a visual interface where you can manually test every tool you have created. It displays the exact JSON schema that the LLM sees, allowing you to confirm that your data types and descriptions are accurate.

For example, suppose you are building a generic search tool. In the Inspector, you can manually enter a query string and execute the function just as the AI would, then examine the raw JSON output to make sure the data is formatted correctly. If the tool fails or returns unexpected data in this controlled environment, you know the error lies in your code rather than in the AI's reasoning.

The rule of thumb is simple: If you cannot get the correct output using the Inspector's GUI, the LLM will definitely not be able to do it via chat. If it breaks in the Inspector, you must fix your code.
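The Inspector only talks to MCP servers, so it can't attach to the local @tool functions we are using for the Arduino. A rough stand-in that applies the same rule of thumb is to call each tool exactly the way an agent would: JSON arguments in, JSON text out. Both `inspect_call` and the toy `search_docs` tool below are hypothetical names for illustration.

```python
import json


def inspect_call(tool_fn, raw_arguments: str) -> str:
    """Call a local tool the way an agent would: JSON arguments in, JSON text out.

    Raises if the arguments don't parse, don't match the signature, or the
    result can't be serialized, so you find the bug before the LLM does.
    """
    args = json.loads(raw_arguments)  # the model sends arguments as a JSON object
    if not isinstance(args, dict):
        raise TypeError("tool arguments must be a JSON object")
    result = tool_fn(**args)  # a TypeError here means the schema and signature disagree
    return json.dumps(result)  # a TypeError here means the output isn't JSON-friendly


def search_docs(query: str, limit: int = 2) -> list:
    """Hypothetical search tool over a tiny in-memory corpus."""
    corpus = ["Arduino pin modes", "Blinking an LED", "Serial baud rates"]
    hits = [doc for doc in corpus if query.lower() in doc.lower()]
    return hits[:limit]
```

Calling `inspect_call(search_docs, '{"q": "led"}')` fails with a TypeError because `q` is not a parameter of `search_docs`. That is exactly the kind of hallucinated-argument bug you want to catch here rather than in a chat transcript.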

In the next part of this series, we will take these tools and hand them over to a Supervisor Agent. This is where the magic happens, as we enable the system to intelligently decide which tool to use for any given task.